Here are a few commands that will trigger a few task instances. This quick start lets you get up and running quickly and take a tour of the UI and the command-line utilities. As you grow and deploy Airflow to production, you will also want to move away from the standalone command we use here to running the components separately. You can read more in Production Deployment.

Architecturally, Airflow has its own server and worker nodes, and Airflow will operate as an independent service that sits outside of your Domino deployment. While each component does not require every setting, some configurations need to be the same across components, otherwise they will not work as expected. Learn more about metadata ingestion with DataHub in the docs.

Use the same configuration across all the Airflow components. This page contains the list of all the available Airflow configurations that you can set in the airflow.cfg file or using environment variables. You can inspect the file either in $AIRFLOW_HOME/airflow.cfg or through the UI. You can override defaults using environment variables, see Configuration Reference. These how-to guides will step you through common tasks in using and configuring an Airflow environment, such as exporting the purged records from the archive tables.

Out of the box, Airflow uses a SQLite database, which you should outgrow fairly quickly since no parallelization is possible using this database backend.

DataHub metadata ingestion can be automated using its Airflow integration or another scheduler of choice.

The PID file for the webserver will be stored in $AIRFLOW_HOME/airflow-webserver.pid or in /run/airflow/webserver.pid.

To troubleshoot a connection used by a task or Qubole operator, check the connection id used in the task/Qubole operator. To check the connection id, go to Airflow Webserver -> Admin -> Connections. Then check the datastore connection: sql_alchemy_conn in the Airflow configuration file (airflow.cfg). If there is no issue with those two things, there could be an issue with the API token used in the connection.

The AIRFLOW_HOME environment variable is used to inform Airflow of the desired location. This step of setting the environment variable should be done before installing Airflow so that the installation process knows where to store the necessary files. Upon running these commands, Airflow will create the $AIRFLOW_HOME folder and create the "airflow.cfg" file with defaults that will get you going fast. Visit localhost:8080 in your browser and log in with the admin account details shown in the terminal. Enable the example_bash_operator DAG in the home page.
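To make the two configuration mechanisms above a little more concrete, here is a minimal sketch in plain Python (no Airflow import needed). It resolves the home directory the way the text describes, AIRFLOW_HOME if set and ~/airflow otherwise, and builds the name of the environment variable that overrides a given airflow.cfg option using Airflow's AIRFLOW__{SECTION}__{KEY} convention. The helper names `airflow_home` and `override_name` are made up for this example, the connection string is a placeholder, and the section that holds sql_alchemy_conn depends on your Airflow version (`database` from 2.3 onward, `core` before that), so check your own airflow.cfg.

```python
import os
from pathlib import Path


def airflow_home(env=None):
    """Return the Airflow home directory: AIRFLOW_HOME if set, else ~/airflow."""
    env = os.environ if env is None else env
    return Path(env.get("AIRFLOW_HOME") or "~/airflow").expanduser()


def override_name(section, key):
    """Name of the environment variable that overrides [section] key in airflow.cfg."""
    return f"AIRFLOW__{section.upper()}__{key.upper()}"


if __name__ == "__main__":
    home = airflow_home()
    print("config file:       ", home / "airflow.cfg")
    print("webserver PID file:", home / "airflow-webserver.pid")
    # Placeholder connection string, for illustration only.
    name = override_name("database", "sql_alchemy_conn")
    print(f'export {name}="postgresql+psycopg2://user:pass@localhost/airflow"')
```

Exporting the same variable on every machine is one way to keep the setting identical across the webserver, scheduler and workers.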
The installation of Airflow is straightforward if you follow the instructions below. Airflow uses constraint files to enable reproducible installation, so using pip and constraint files is recommended. Airflow requires a home directory, and uses ~/airflow by default, but you can set a different location if you prefer.

Installing via Poetry or pip-tools is not currently supported. While there have been successes with using other tools like poetry or pip-tools, they do not share the same workflow as pip, especially when it comes to constraint vs. requirements management. If you wish to install Airflow using those tools you should use the constraint files and convert them to the appropriate format and workflow that your tool requires. There are known issues with bazel that might lead to circular dependencies when using it to install Airflow. Please switch to pip if you encounter such problems. The Bazel community is working on fixing the problem, so it might be that newer versions of bazel will handle it.

This document is meant to give an overview of all common tasks while using the CLI.

Now if you go to your web browser at localhost:8080, you will be able to see the Airflow UI loaded with many examples.

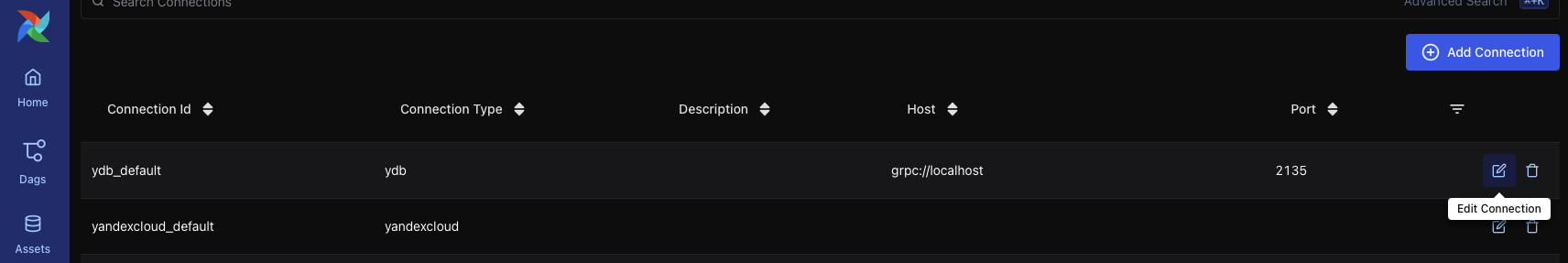
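Since pip with a constraint file is the recommended way to install, here is a small sketch of how the constraint URL is put together. Airflow publishes one constraint file per Airflow version and Python minor version on GitHub, following the pattern shown in `CONSTRAINTS_URL`. The function names are invented for this example, and the version numbers in the demo are only sample values, so substitute the ones you actually want.

```python
import sys

# URL pattern used by the published Airflow constraint files.
CONSTRAINTS_URL = (
    "https://raw.githubusercontent.com/apache/airflow/"
    "constraints-{airflow}/constraints-{python}.txt"
)


def constraints_url(airflow_version, python_version=None):
    """Build the constraint file URL; defaults to the running interpreter's major.minor."""
    if python_version is None:
        python_version = f"{sys.version_info.major}.{sys.version_info.minor}"
    return CONSTRAINTS_URL.format(airflow=airflow_version, python=python_version)


def pip_command(airflow_version, python_version=None):
    """Return the pip invocation as an argument list (not executed here)."""
    return [
        "pip", "install", f"apache-airflow=={airflow_version}",
        "--constraint", constraints_url(airflow_version, python_version),
    ]


if __name__ == "__main__":
    # Sample versions only; pick the release and Python version you need.
    print(" ".join(pip_command("2.3.0", "3.8")))
```

The sketch only prints the command, so you can review it before running it in your own shell or virtual environment.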

Only pip installation is currently officially supported. Start the Airflow webserver in a new terminal window. Activate the airflow env first if needed (conda activate airflow), then run airflow webserver.

Connections store credentials and are used by tasks for secure access to external systems. You can retain the connection to the database by building a custom Operator which leverages the PostgresHook to keep a connection to the db open while you perform some set of SQL operations. Another option is to persist this temporary data to XComs. In PythonOperator or BashOperator tasks, use … You may find some examples in contrib on incubator-airflow or in Airflow-Plugins.

Successful installation requires a Python 3 environment. Starting with Airflow 2.3.0, Airflow is tested with Python 3.8, 3.9, 3.10. Note that Python 3.11 is not yet supported.

I run the airflow scheduler command and it is working. However, I am not able to set up the airflow scheduler service. Following is my airflow scheduler service code:

Wants=rvice rvice
ExecStart=/bin/airflow scheduler -n $

That line is saying to run /bin/airflow, which I am assuming doesn't exist on your machine. It is probably in /usr/local/bin/airflow or somewhere like that. You can run which airflow to see your actual installed location. You might be able to add a symlink for it so /bin/airflow points to your actual location, but no need to over-complicate it, at least get it working first.
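If you would rather script the lookup than run which by hand, the sketch below does the same thing with Python's standard `shutil.which` and prints an ExecStart line that uses the path it finds. The function name `exec_start_line` is made up for this example, and the output is only a suggestion for what to paste into your unit file; the remaining scheduler arguments from the original service file are left out because they were cut off in the question.

```python
import shutil


def exec_start_line(executable="airflow", subcommand="scheduler", which=shutil.which):
    """Return an ExecStart line that points at the executable's real location."""
    path = which(executable)
    if path is None:
        raise FileNotFoundError(f"{executable!r} was not found on PATH")
    return f"ExecStart={path} {subcommand}"


if __name__ == "__main__":
    try:
        print(exec_start_line())
    except FileNotFoundError as err:
        # Typical cause: the environment where Airflow is installed is not active.
        print(err)
```

Run it from the same environment in which airflow scheduler already works, since systemd does not inherit your shell's PATH and needs the absolute path.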
